29 July 2022: Database Analysis

A Novel Deep Learning Model to Distinguish Malignant Versus Benign Solid Lung Nodules

Shuwen Wang1ABCDEF, Leilei Zhou1CD, Xiaoran Li2BD, Jie Tang3BD, Jing Wu1AB, Xindao Yin1CDFG, Yu-Chen Chen1ACDEFG*, Lingquan Lu1ACDEFG

DOI: 10.12659/MSM.936830

Med Sci Monit 2022; 28:e936830

Abstract

BACKGROUND: In this study we aimed to establish a new transfer learning model based on noncontrast and thin-layer computed tomography (CT) scans to distinguish between malignant and benign solid lung nodules.

MATERIAL AND METHODS: CT images of 202 patients with 210 histopathologically confirmed solid lung nodules (127 malignant, 83 benign) were retrospectively collected from 3 institutions between January 2016 and December 2020. Two experienced thoracic radiologists reviewed all images and delineated the regions of interest (ROIs) on the three-dimensional (3D) images slice by slice. The lesions and images were divided into training and testing sets at a ratio of 7:3. The Inception V3 model, pretrained on ImageNet, was trained on the training dataset, and 5-fold cross-validation was used to select the optimal model. Receiver operating characteristic (ROC) curves were plotted to evaluate model performance.

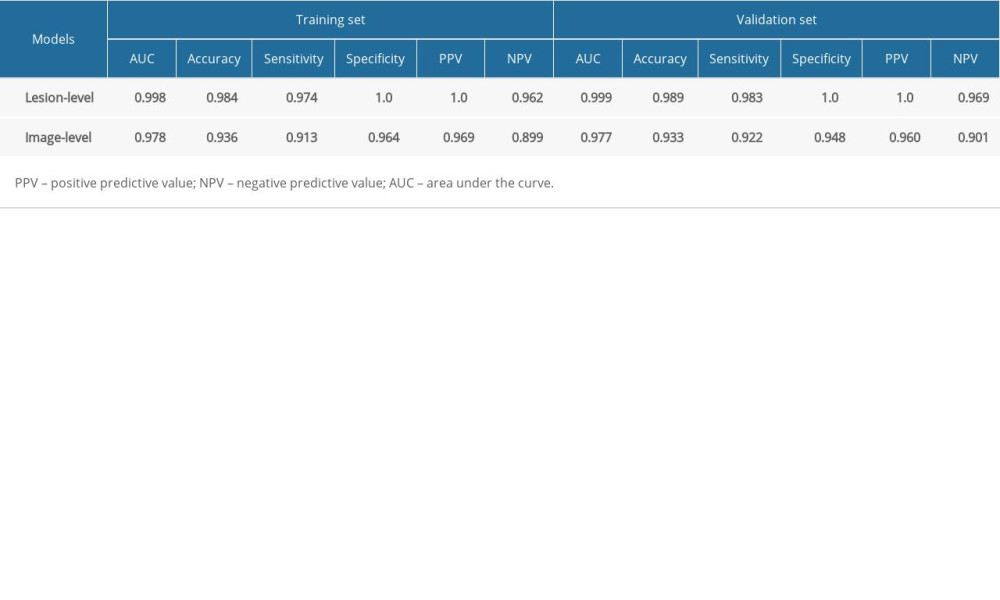

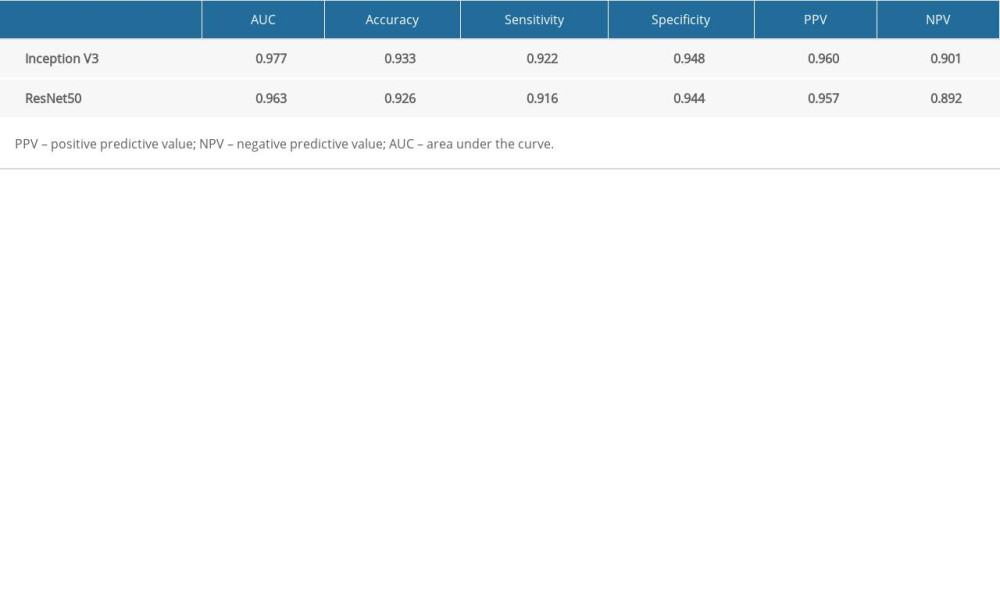

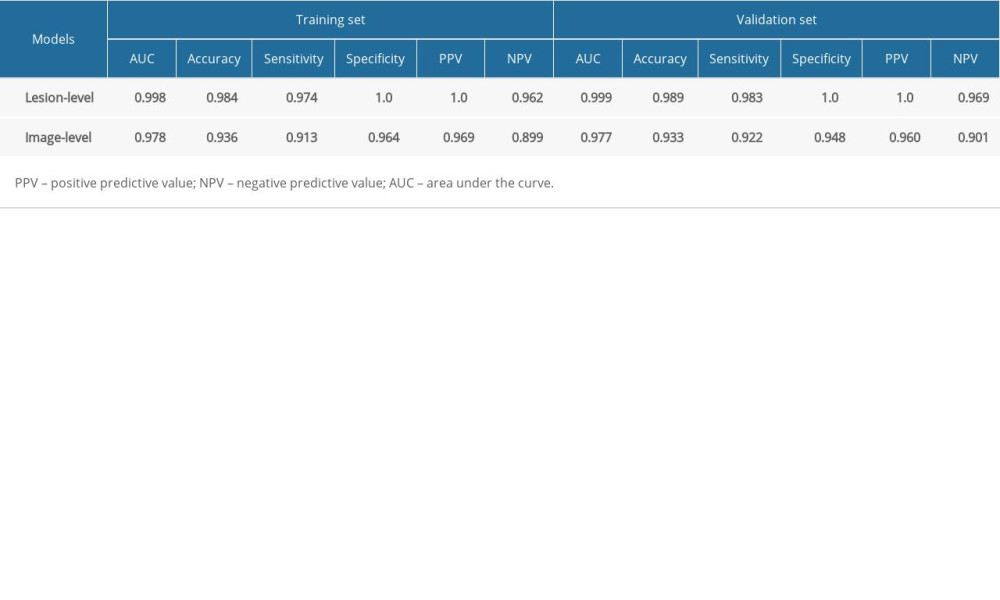

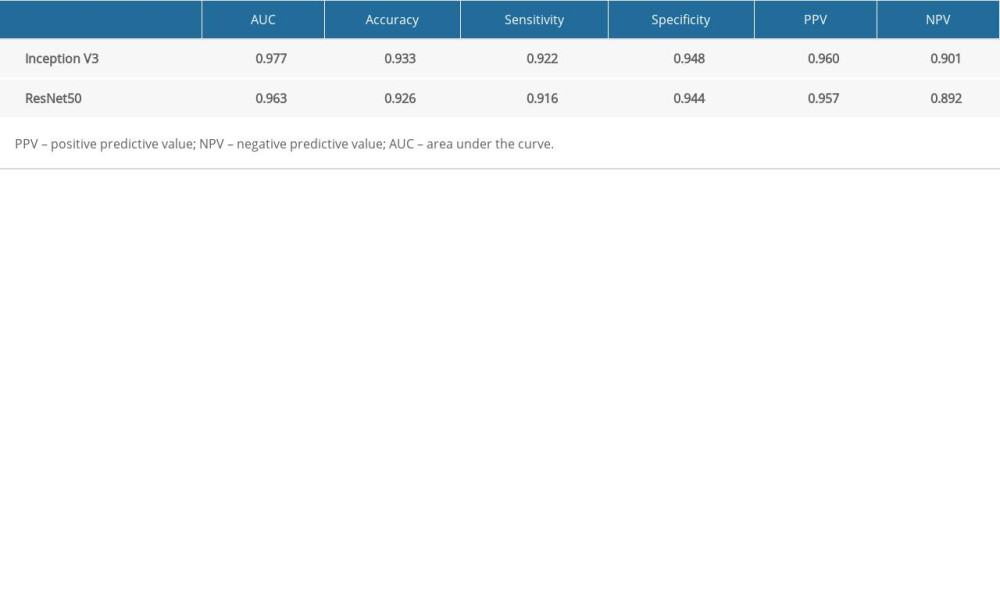

RESULTS: In the validation set, the AUC, accuracy, sensitivity, and specificity of the lesion-level Inception V3 model were 0.999, 0.989, 0.983, and 1.0, respectively, higher than those of the image-level model (0.997, 0.933, 0.922, and 0.948, respectively); however, the difference between the 2 models was not significant (P>0.05). The image-level ResNet50 model achieved an AUC, accuracy, sensitivity, and specificity of 0.963, 0.926, 0.916, and 0.944, respectively, lower than those of Inception V3.

CONCLUSIONS: Our study developed a novel deep learning model based on noncontrast thin-slice CT scans to classify benign vs malignant lung nodules, and the Inception V3 model achieved high differentiation accuracy and specificity.

Keywords: Deep Learning, Neural Networks, Computer, solitary pulmonary nodule, Humans, Lung Neoplasms, Tomography, X-Ray Computed

Background

Lung cancer has long been a leading cause of death. Early and accurate diagnosis is important for survival, yet only 15% of lung cancers are diagnosed at an early stage [1,2]. CT is widely used in health examinations because of its high performance in lung nodule screening. According to the Lung CT Screening Reporting and Data System (Lung-RADS version 1.1) [3], solid nodules assigned to category 4 (size ≥8 mm at baseline) are suspicious for malignancy, and additional diagnostic testing is recommended. Nodule management guidelines [4] also specify follow-up intervals as ranges rather than precise time points. Apart from size, radiologists make a presumptive diagnosis based on radiographic features such as spiculation, lobulation, and pleural reaction, among others. However, overdiagnosis and a high false-positive rate (FPR) persist [5,6] because these lesions are often misclassified, owing to their similar appearance on CT images and to scan parameters such as slice thickness and contrast enhancement of structures within the image [7,8]. Therefore, clinicians still rely on histological examination, an invasive procedure, as the criterion standard, which can be technically challenging and sometimes causes complications.

Radiomics and deep learning (DL) models have been widely proposed and developed for medical applications. Radiomics consists of a series of processes, including lesion segmentation, radiomic feature extraction and selection, and model construction and evaluation. Hawkins et al [9] extracted 23 features for predicting malignant nodules (AUC=0.83). Chen et al [10] obtained an accuracy of 0.84 in nodule classification by developing a support vector machine (SVM) algorithm using 72 cases. In contrast to the multistep radiomics pipeline, a deep learning classifier is developed through training, testing, and performance evaluation [11], and many techniques are used, such as convolutional neural networks (CNNs) [12], unsupervised learning [13], and transfer learning [14]. Transfer learning allows a model to be pretrained on a very large image dataset and then fine-tuned on task-specific samples; this helps produce a stable classifier that is less prone to overfitting than one trained from scratch [15]. Inception and ResNet models are among the most frequently used in medical studies. Inception V3 has performed well in pathological classification of NSCLC [16], classification of breast tumors [17], and detection of knee injuries on MRI [18], and ResNet50 is widely applied in the diagnosis of brain diseases [19], classification of skin lesions [20], and coronary artery calcium detection [21].
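The transfer learning idea described above, together with the 2-node SoftMax output used later in this study, can be illustrated with a minimal pure-Python sketch. It assumes that a pretrained backbone (such as Inception V3) has already turned each image into a fixed feature vector, and it trains only a new 2-class softmax head on top of those frozen features. This is one common transfer learning strategy (feature extraction); the study does not state which layers were frozen or fine-tuned, and the toy feature values, learning rate, and function names are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    # Subtract the largest logit for numerical stability
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_proba(weights, bias, x) -> List[float]:
    # One linear logit per class, followed by softmax
    logits = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]
    return softmax(logits)


def train_softmax_head(features, labels, n_classes=2, lr=0.5, epochs=200,
                       seed=0) -> Tuple[List[List[float]], List[float]]:
    """Fit a linear softmax layer on frozen feature vectors with SGD on cross-entropy."""
    rng = random.Random(seed)
    dim = len(features[0])
    weights = [[rng.uniform(-0.01, 0.01) for _ in range(dim)] for _ in range(n_classes)]
    bias = [0.0] * n_classes
    for _ in range(epochs):
        for x, y in zip(features, labels):
            probs = predict_proba(weights, bias, x)
            for c in range(n_classes):
                # Gradient of the cross-entropy loss w.r.t. logit c is p_c - 1[c == y]
                grad = probs[c] - (1.0 if c == y else 0.0)
                for d in range(dim):
                    weights[c][d] -= lr * grad * x[d]
                bias[c] -= lr * grad
    return weights, bias


# Hypothetical 2-D "frozen features" standing in for pretrained CNN outputs
features = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.8]]
labels = [0, 0, 1, 1]  # 0 = benign, 1 = malignant
w, b = train_softmax_head(features, labels)
print(predict_proba(w, b, [0.95, 0.15]))
```

In a real pipeline the backbone would be a deep network and the head would usually be trained jointly with some of its upper layers; the sketch isolates only the final 2-node classification step.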

However, deep learning algorithms require computationally intensive training and considerable expertise for tuning. To achieve better performance, many investigators have attempted to optimize models by adjusting the input images. For instance, a multi-scale CNN model [22] reached an accuracy of 0.87 for binary nodule classification based on multiple down-sampling. Lyu et al [23] proposed ML-xResNet to classify different types of lung nodule malignancy and achieved 92.19% accuracy. Nasrullah et al [25] obtained a sensitivity of 94% when combining ML-xResNet with clinical factors. A 3D fully convolutional neural network for reducing the false-positive rate in lung nodule classification has also been developed [24]. There is also room for improvement with respect to image acquisition parameters, because radiomic features have been shown to be strongly affected by variations in acquisition parameters [26–28]. Dilger et al [29] improved pulmonary nodule classification by combining intra- and perinodular radiomic features (AUC=0.80). He et al [28] showed that thin-slice and noncontrast CT can provide more accurate radiomic information. Dou et al [30] showed that perinodular radiomic features can be used to predict distant metastasis. Many investigators [31–33] have attempted to distinguish granulomas from malignancies using quantitative radiomics or computerized feature-based analysis. In DL approaches, by contrast, the main concept is to expose the network to as many combinations of imaging acquisition parameters as possible.

It is imperative to explore computer-aided diagnosis further to develop novel, accurate approaches that help monitor individuals with pulmonary nodules and allow safe, cost-effective diagnosis while avoiding unnecessary procedures for benign growths. In this study, 2 modern deep learning models that use transfer learning and thin-slice noncontrast CT images were designed for nodule classification. Such models could potentially better predict the clinical outcomes of lung nodules, even very small ones, and ultimately help improve patient prognosis.

Material and Methods

PATIENTS:

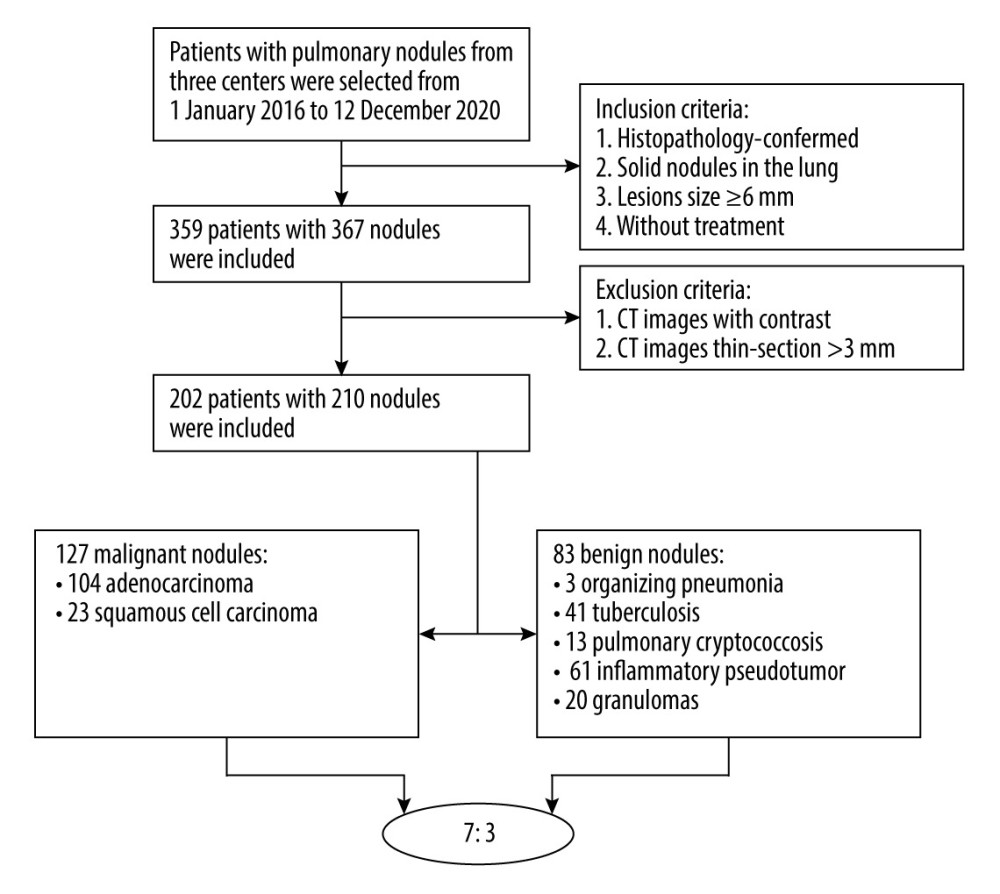

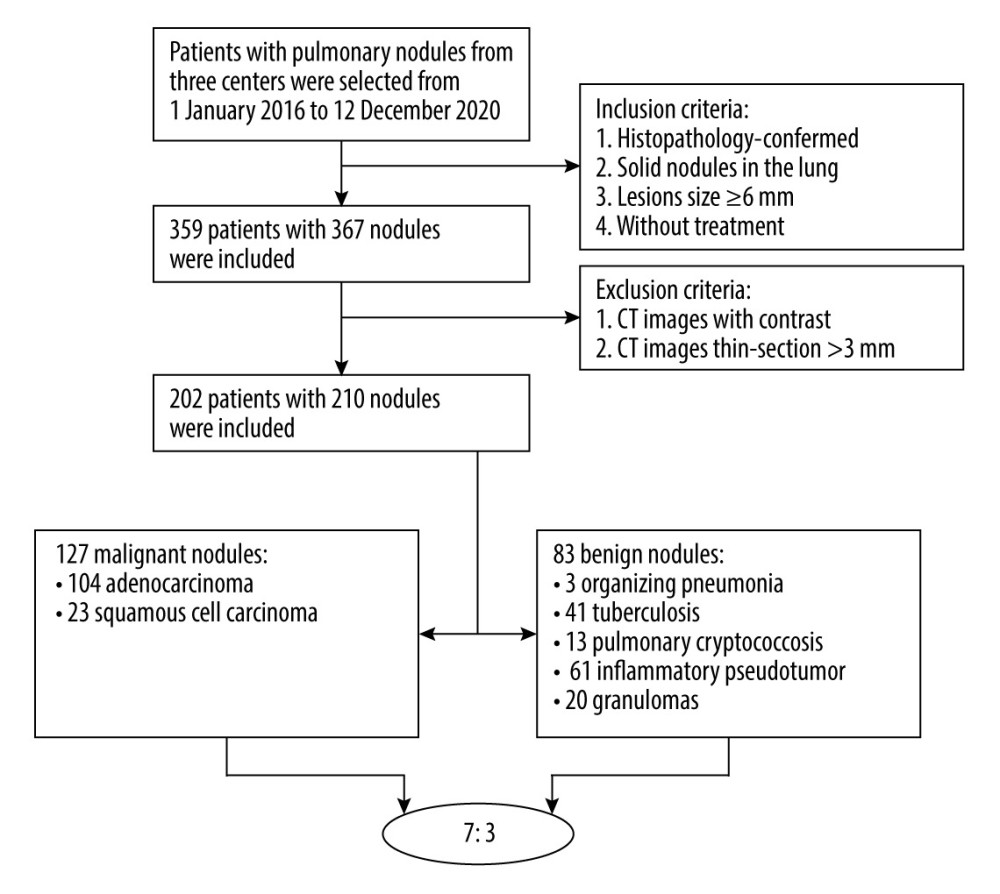

First, we retrospectively reviewed the electronic pathological records of patients with solid lung nodules between January 2016 and December 2020. The inclusion criteria were: 1) histopathologically confirmed diagnosis, 2) solid nodule in the lung, 3) lesion size ≥6 mm on axial CT images, and 4) no prior treatment. In total, 359 patients with 367 nodules met these criteria. The exclusion criteria were: 1) CT slice thickness greater than 3 mm and 2) contrast-enhanced CT images. Finally, a total of 202 patients (125 males and 77 females, age range: 24–83 years) were enrolled, with 127 malignant (104 adenocarcinoma and 23 squamous cell carcinoma) and 83 benign (3 organizing pneumonia, 41 tuberculosis, 13 pulmonary cryptococcosis, 6 inflammatory pseudotumors, and 20 granulomas) nodules. By institution, we collected data on 73 patients (49 males, 24 females) with 78 nodules (55 malignant and 23 benign) from Nanjing First Hospital and on 98 patients (69 males, 29 females) with 98 nodules (72 malignant and 26 benign) from Gaochun People's Hospital. Lastly, we included 31 patients (3 males and 28 females) with 34 benign nodules from Nanjing Second Hospital.
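As a consistency check on the cohort description above, the per-institution nodule counts can be tallied in a few lines of Python. The numbers are copied from the text; the dictionary layout and variable names are illustrative only.

```python
# Per-institution counts as reported in the Patients section
cohorts = {
    "Nanjing First Hospital": {"patients": 73, "malignant": 55, "benign": 23},
    "Gaochun People's Hospital": {"patients": 98, "malignant": 72, "benign": 26},
    "Nanjing Second Hospital": {"patients": 31, "malignant": 0, "benign": 34},
}
# Histological subtypes as reported
malignant_subtypes = {"adenocarcinoma": 104, "squamous cell carcinoma": 23}
benign_subtypes = {
    "organizing pneumonia": 3,
    "tuberculosis": 41,
    "pulmonary cryptococcosis": 13,
    "inflammatory pseudotumor": 6,
    "granuloma": 20,
}

total_patients = sum(c["patients"] for c in cohorts.values())
total_malignant = sum(c["malignant"] for c in cohorts.values())
total_benign = sum(c["benign"] for c in cohorts.values())
total_nodules = total_malignant + total_benign

# Subtype totals should agree with the per-institution totals
assert sum(malignant_subtypes.values()) == total_malignant
assert sum(benign_subtypes.values()) == total_benign
print(total_patients, total_nodules, total_malignant, total_benign)  # 202 210 127 83
```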

Clinical characteristics included sex (male or female), age (mean ± SD), nodule size (0.6–1.0 cm, 1.0–3.0 cm, and >3.0 cm), location, lobulation (absent/present), and spiculation (absent/present). The workflow is shown in Figure 1.

CT IMAGE ACQUISITION PARAMETERS:

This was a multi-center study. Noncontrast CT examinations were acquired at Nanjing First Hospital and Nanjing Second Hospital using Philips Ingenuity CT Core 128 scanners (Philips Medical Systems, Haifa, Matam) and Philips Brilliance 64 CT scanners (Philips Medical Systems, Jiangsu, China), and at Gaochun People's Hospital using GE LightSpeed RT16 CT scanners (GE Medical Systems, Milwaukee, WI, USA). All CT scans were performed at a fixed tube voltage of 120 kVp and a tube current–time product of 30–300 mAs. The pixel spacing ranged from 0.625 to 0.843 mm depending on patient size, and the reconstruction slice thickness was 1 mm or 3 mm. Images were reconstructed with a 512×512 matrix.
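To make the acquisition parameters above concrete, the short calculation below converts physical sizes into pixels and slices. It uses the reported pixel-spacing range (0.625–0.843 mm), matrix size (512×512), and slice thicknesses (1 or 3 mm); the 6-mm nodule is the smallest size allowed by the inclusion criteria, and the helper names are illustrative.

```python
MATRIX = 512  # reconstruction matrix size (pixels per side)


def field_of_view_mm(pixel_spacing_mm: float, matrix: int = MATRIX) -> float:
    # In-plane field of view covered by the reconstructed image
    return pixel_spacing_mm * matrix


def extent_in_pixels(diameter_mm: float, pixel_spacing_mm: float) -> float:
    # Number of pixels spanned by an object of the given in-plane diameter
    return diameter_mm / pixel_spacing_mm


def approx_slices_covered(diameter_mm: float, slice_thickness_mm: float) -> float:
    # Rough count of slices through the object; ignores where slice boundaries fall
    return diameter_mm / slice_thickness_mm


for spacing in (0.625, 0.843):
    print(f"spacing {spacing} mm: FOV {field_of_view_mm(spacing):.1f} mm, "
          f"6-mm nodule spans ~{extent_in_pixels(6.0, spacing):.1f} px")
for thickness in (1.0, 3.0):
    print(f"{thickness} mm slices: 6-mm nodule on ~{approx_slices_covered(6.0, thickness):.0f} slices")
```

The numbers show why thin slices matter for small nodules: at 3 mm a 6-mm nodule appears on only about 2 slices, versus about 6 at 1 mm.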

Two thoracic radiologists (Li and Wu, with 5 and 10 years of experience in chest image interpretation, respectively) who were blinded to the results independently reviewed all CT images on the Picture Archiving and Communication Systems (PACS) and reached a consensus by discussion in case of disagreement. Lesion size, spiculation, and lobulation were evaluated as CT morphological features.

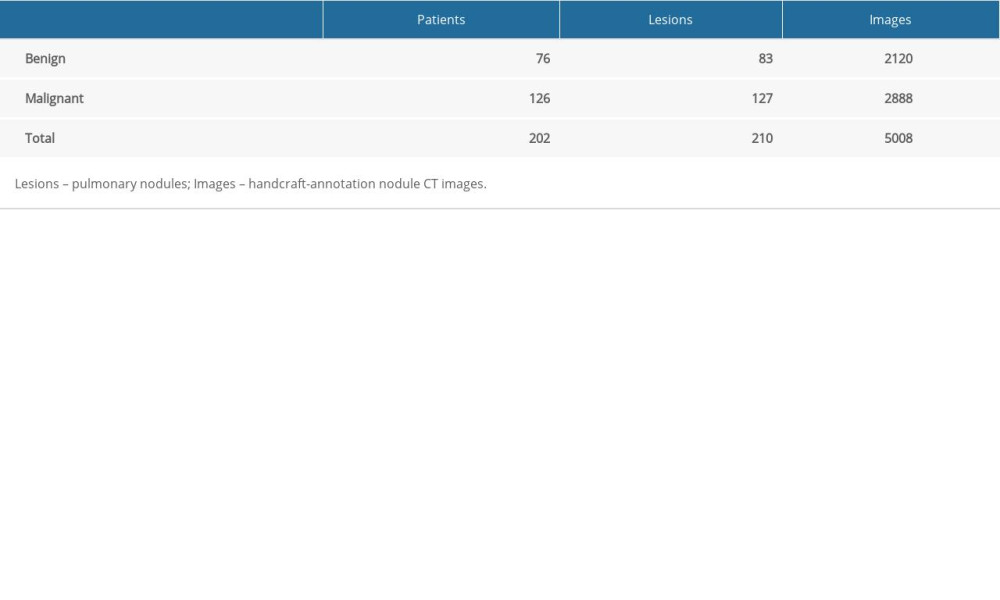

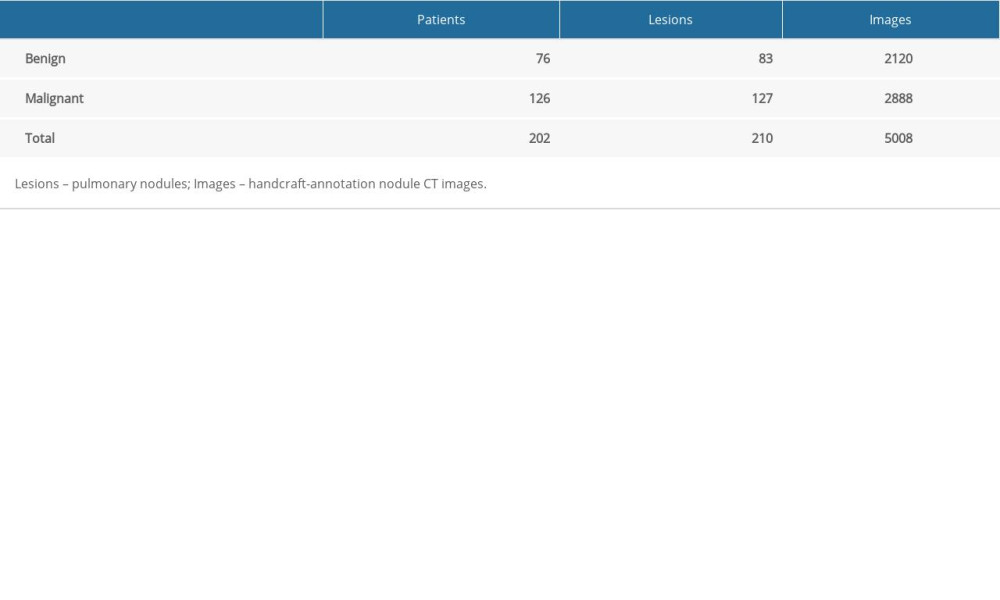

CNN INPUT IMAGE ANNOTATION:

The thin-slice noncontrast images were downloaded from PACS in DICOM format, with the window width and level set at 750 HU and −500 HU, respectively. The images were then imported into ITK-SNAP software (version 3.8.0, http://www.itksnap.org), and 2 thoracic radiologists delineated the ROIs on the 3D images slice by slice. The lesion and annotated-image datasets are summarized in Table 1.
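The window setting above maps a 750-HU-wide intensity range centered at −500 HU, that is, −875 to −125 HU, onto the display range. A minimal sketch of that mapping follows. It assumes that stored DICOM pixel values are first converted to HU with the standard RescaleSlope and RescaleIntercept attributes (the default intercept of −1024 here is only an illustrative assumption) and that the windowed output is scaled to 8-bit grayscale; the study does not state how images were scaled before being fed to the networks.

```python
from typing import List, Sequence


def to_hounsfield(stored_values: Sequence[float], rescale_slope: float = 1.0,
                  rescale_intercept: float = -1024.0) -> List[float]:
    # DICOM rescale: HU = stored value * RescaleSlope + RescaleIntercept
    # (defaults are assumed; real values come from each file's header)
    return [v * rescale_slope + rescale_intercept for v in stored_values]


def apply_window(hu_values: Sequence[float], width: float = 750.0,
                 level: float = -500.0) -> List[int]:
    lower = level - width / 2.0  # -875 HU for the study's setting
    upper = level + width / 2.0  # -125 HU for the study's setting
    out = []
    for hu in hu_values:
        clipped = min(max(hu, lower), upper)  # values outside the window saturate
        out.append(round((clipped - lower) / (upper - lower) * 255))
    return out


print(apply_window([-1000.0, -875.0, -500.0, -125.0, 40.0]))
```

Air (about −1000 HU) maps to black and soft tissue (above −125 HU) saturates to white, so the gray levels are spent on lung parenchyma and nodule margins.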

ESTABLISHMENT OF A MODEL:

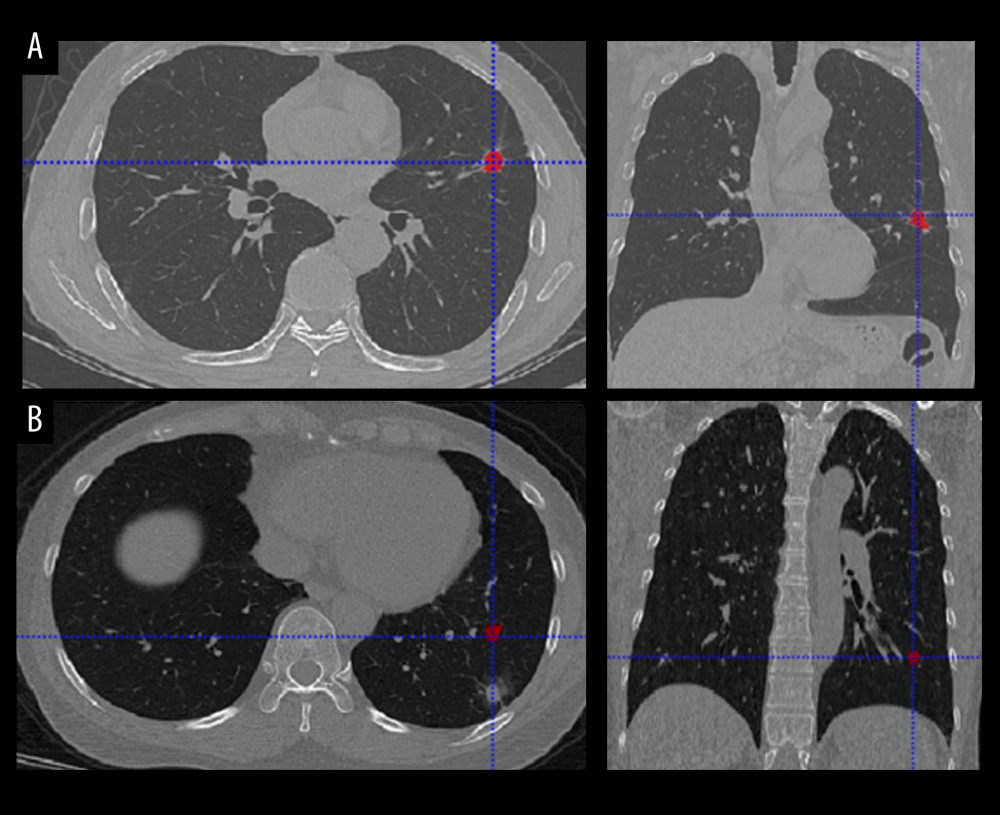

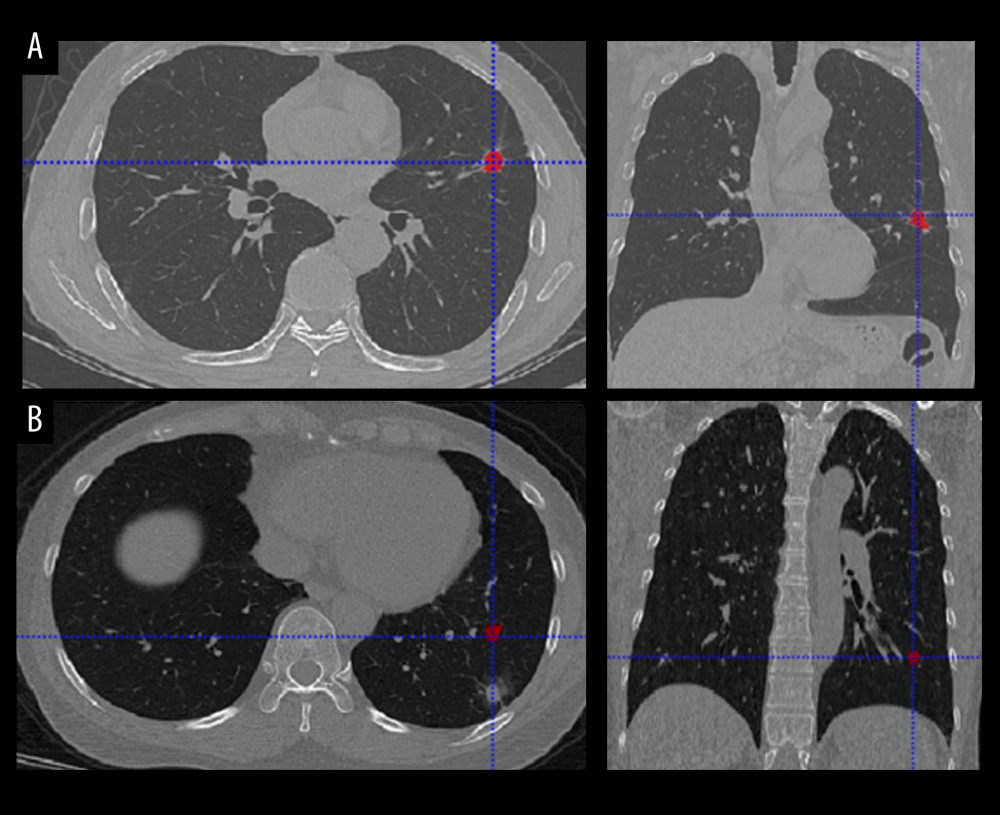

Two deep learning models (Inception V3 and ResNet50) were trained and optimized to distinguish between benign and malignant lung nodules. We set 2 nodes (benign/malignant) in the SoftMax layer and kept the rest of each architecture unchanged. All models were pretrained on ImageNet [34], which contains more than 14 million natural images, and then trained on the combined set of training images from both datasets. Five-fold cross-validation was used to select the optimal model: the 147 training lesions were randomly divided into 5 approximately equal subsets, and the remaining 63 lesions formed the test group. Five different combinations of subsets were analyzed, and the result of each subset and the mean across all subsets were reported. CT images with discordant interpretations by the deep learning models and radiologists are shown in Figure 2.
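The 7:3 lesion-level split and five-fold cross-validation described above can be sketched as follows. Splitting by lesion ID rather than by image is assumed here because it keeps all slices of one nodule in the same subset and so avoids leakage between training and test images. Fold assignment is simple random round-robin; the study does not say whether folds were stratified by malignancy, and the seed and function names are hypothetical.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def split_lesions(lesion_ids: Sequence[int], train_fraction: float = 0.7,
                  seed: int = 42) -> Tuple[List[int], List[int]]:
    # Shuffle lesion IDs reproducibly, then cut at the requested fraction
    ids = list(lesion_ids)
    random.Random(seed).shuffle(ids)
    n_train = round(len(ids) * train_fraction)
    return ids[:n_train], ids[n_train:]


def k_fold_partition(ids: Sequence[int], k: int = 5) -> List[List[int]]:
    # Round-robin assignment gives folds whose sizes differ by at most one
    return [list(ids[i::k]) for i in range(k)]


def cross_validation_rounds(train_ids: Sequence[int],
                            k: int = 5) -> Iterator[Tuple[List[int], List[int]]]:
    # Each round holds out one fold for validation and trains on the rest
    folds = k_fold_partition(train_ids, k)
    for i, val_fold in enumerate(folds):
        train_fold = [x for j, fold in enumerate(folds) if j != i for x in fold]
        yield train_fold, val_fold


lesions = list(range(210))  # one ID per lesion
train_ids, test_ids = split_lesions(lesions)
print(len(train_ids), len(test_ids))  # 147 63
print(sorted(len(f) for f in k_fold_partition(train_ids)))  # [29, 29, 29, 30, 30]
```

Because 147 is not divisible by 5, the folds necessarily hold 29 or 30 lesions each.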

STATISTICAL ANALYSIS:

SPSS (v.25.0; IBM) software was used to analyze the clinical characteristic data. The χ2 test was used to assess the statistical significance of categorical variables, including sex, lesion location, lobulation, and spiculation. An independent
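For a 2×2 comparison such as lobulation (present/absent) in malignant versus benign nodules, the Pearson χ2 test has a closed form. The sketch below computes the statistic and its 1-degree-of-freedom P value using only the standard library (for 1 df, P = erfc(√(χ2/2))). It omits the Yates continuity correction, and the counts in the example are hypothetical, not the study's data; the study itself used SPSS.

```python
import math
from typing import Tuple


def chi_square_2x2(a: int, b: int, c: int, d: int) -> Tuple[float, float]:
    """Pearson chi-square for the table [[a, b], [c, d]] (no continuity correction)."""
    n = a + b + c + d
    denom = (a + b) * (c + d) * (a + c) * (b + d)
    if denom == 0:
        raise ValueError("a row or column total is zero")
    chi2 = n * (a * d - b * c) ** 2 / denom
    # Upper-tail probability of a chi-square variable with 1 degree of freedom
    p = math.erfc(math.sqrt(chi2 / 2.0))
    return chi2, p


# Hypothetical counts: rows = malignant/benign, columns = feature present/absent
chi2, p = chi_square_2x2(20, 5, 5, 20)
print(round(chi2, 2), p < 0.001)
```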

The performance of the proposed models for nodule classification was evaluated using sensitivity, specificity, accuracy, and the area under the receiver operating characteristic curve (AUC). AUC values range from 0.5 (no better than chance) to 1 (perfect discrimination). The DeLong test was used to compare AUCs between models.
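The DeLong test compares two AUCs estimated on the same cases by accounting for their correlation. The stdlib-only sketch below computes each model's AUC from Mann-Whitney placement values, estimates the variance of the AUC difference from the placement covariances, and returns a two-sided P value from the normal approximation. Variable names and the toy scores are illustrative; a validated statistical package is preferable for actual analyses.

```python
import math
from typing import List, Sequence, Tuple


def _psi(x: float, y: float) -> float:
    # Mann-Whitney kernel: 1 if the positive case scores higher, 0.5 for ties
    if x > y:
        return 1.0
    return 0.5 if x == y else 0.0


def _placements(pos: Sequence[float], neg: Sequence[float]) -> Tuple[List[float], List[float]]:
    # V10: per-positive placement values; V01: per-negative placement values
    v10 = [sum(_psi(x, y) for y in neg) / len(neg) for x in pos]
    v01 = [sum(_psi(x, y) for x in pos) / len(pos) for y in neg]
    return v10, v01


def _cov(a: Sequence[float], b: Sequence[float]) -> float:
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    return sum((x - ma) * (y - mb) for x, y in zip(a, b)) / (len(a) - 1)


def delong_test(labels: Sequence[int], scores_a: Sequence[float],
                scores_b: Sequence[float]) -> Tuple[float, float, float]:
    """Return (auc_a, auc_b, two-sided p) for paired scores.

    labels are 1 (malignant) or 0 (benign); at least 2 cases of each class are needed.
    """
    def split(scores):
        pos = [s for s, y in zip(scores, labels) if y == 1]
        neg = [s for s, y in zip(scores, labels) if y == 0]
        return pos, neg

    v10a, v01a = _placements(*split(scores_a))
    v10b, v01b = _placements(*split(scores_b))
    auc_a = sum(v10a) / len(v10a)
    auc_b = sum(v10b) / len(v10b)
    m, n = len(v10a), len(v01a)
    # Variance of (AUC_a - AUC_b) from the placement covariance matrices
    var = ((_cov(v10a, v10a) + _cov(v10b, v10b) - 2 * _cov(v10a, v10b)) / m
           + (_cov(v01a, v01a) + _cov(v01b, v01b) - 2 * _cov(v01a, v01b)) / n)
    diff = auc_a - auc_b
    if var <= 0:
        p = 1.0 if diff == 0 else 0.0
    else:
        p = math.erfc(abs(diff) / math.sqrt(2 * var))  # two-sided normal P value
    return auc_a, auc_b, p


labels = [1, 1, 0, 0]
print(delong_test(labels, [0.9, 0.8, 0.3, 0.1], [0.9, 0.2, 0.3, 0.1]))
```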

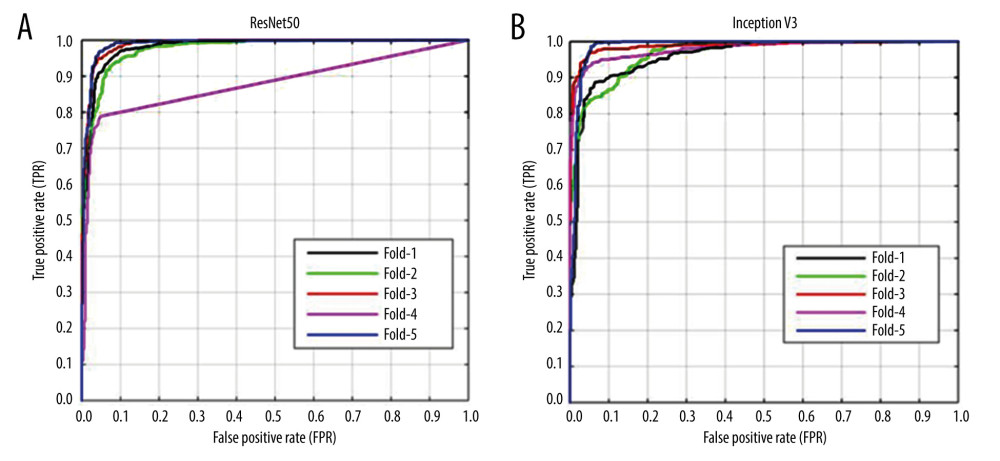

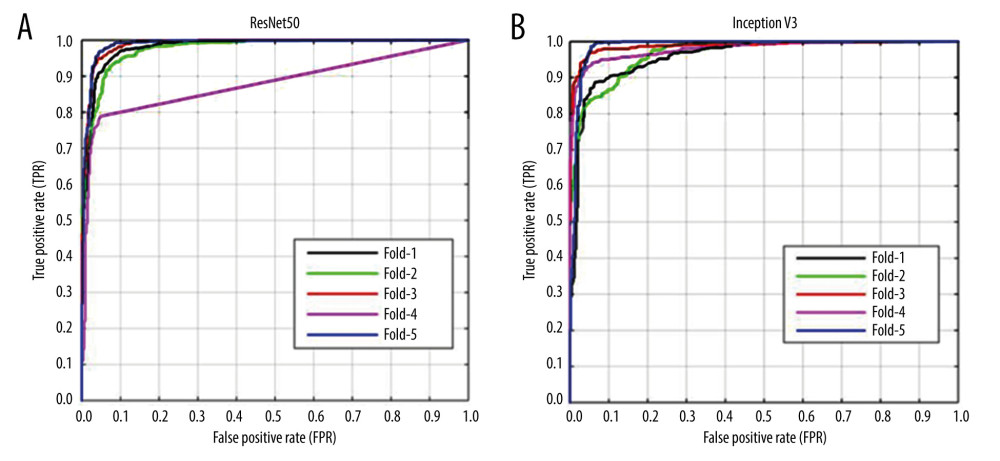

A receiver operating characteristic (ROC) curve, a graphical technique for describing and comparing the accuracy of diagnostic tests, was used to evaluate the trade-off between sensitivity and specificity of the models. The ROC curves were plotted with Matplotlib.
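The ROC analysis and threshold-based metrics described above can be sketched without external libraries: sweeping the decision threshold over the predicted malignancy probabilities yields (FPR, TPR) points, the trapezoidal rule gives the AUC, and a single threshold (0.5 here, an assumption) gives sensitivity, specificity, and accuracy. The toy labels and scores are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple


def roc_points(labels: Sequence[int], scores: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Return (fpr, tpr) lists obtained by lowering the threshold through every distinct score."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    fpr, tpr = [0.0], [0.0]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Consume all cases tied at this score before emitting a point
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        fpr.append(fp / n_neg)
        tpr.append(tp / n_pos)
    return fpr, tpr


def trapezoid_auc(fpr: Sequence[float], tpr: Sequence[float]) -> float:
    return sum((fpr[k] - fpr[k - 1]) * (tpr[k] + tpr[k - 1]) / 2 for k in range(1, len(fpr)))


def threshold_metrics(labels: Sequence[int], scores: Sequence[float],
                      threshold: float = 0.5) -> Dict[str, float]:
    # Cases scoring at or above the threshold are called malignant
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    tn = sum(1 for y, s in zip(labels, scores) if y == 0 and s < threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / len(labels),
    }


labels = [1, 1, 0, 0]
scores = [0.9, 0.2, 0.3, 0.1]
fpr, tpr = roc_points(labels, scores)
print(trapezoid_auc(fpr, tpr), threshold_metrics(labels, scores))
```

The fpr and tpr lists can be passed directly to matplotlib's `plt.plot(fpr, tpr)` to draw a curve of the kind shown in Figure 3.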

Results

CLINICAL CHARACTERISTICS AND SUBJECTIVE CT FEATURES OF NODULES:

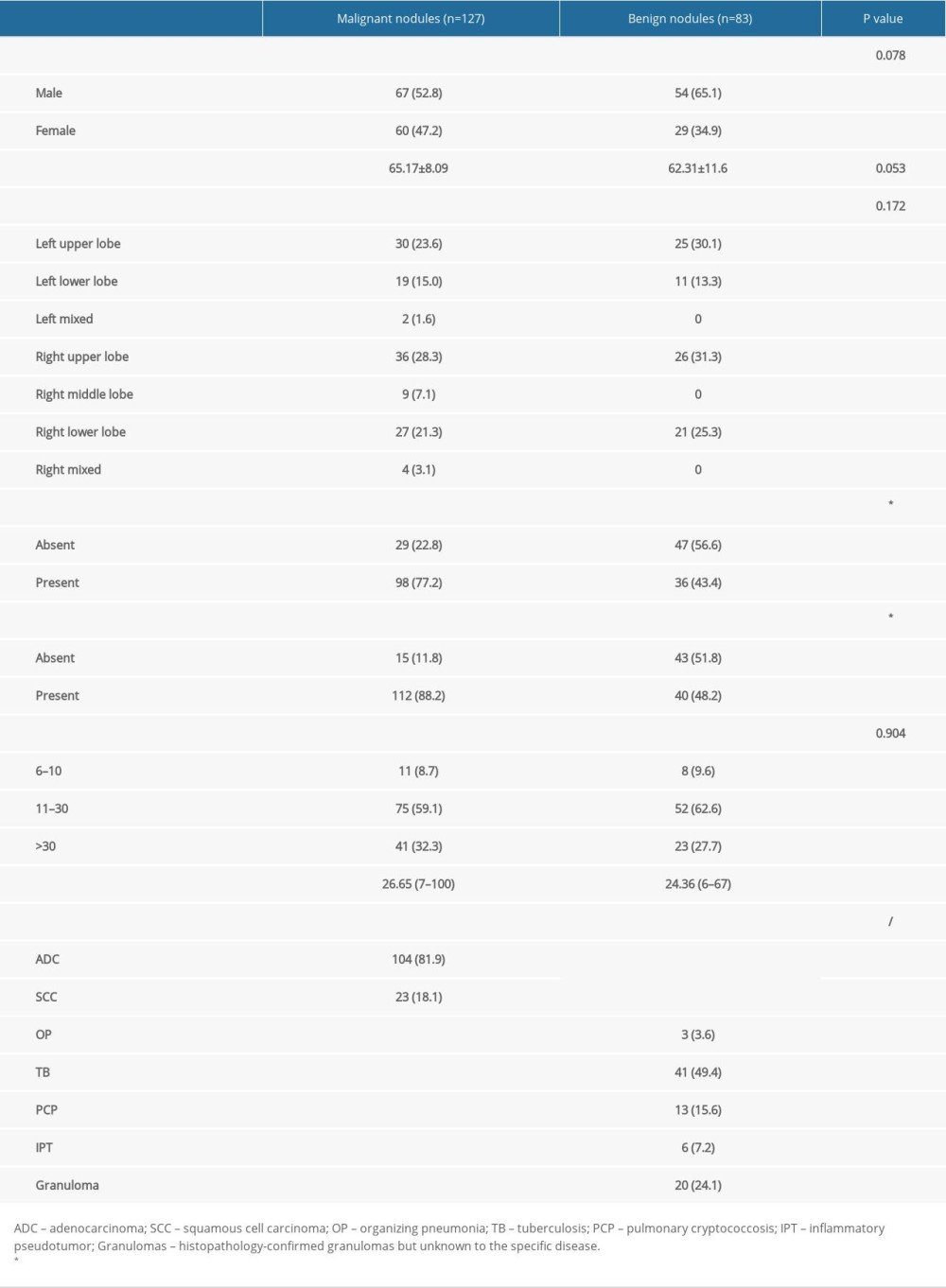

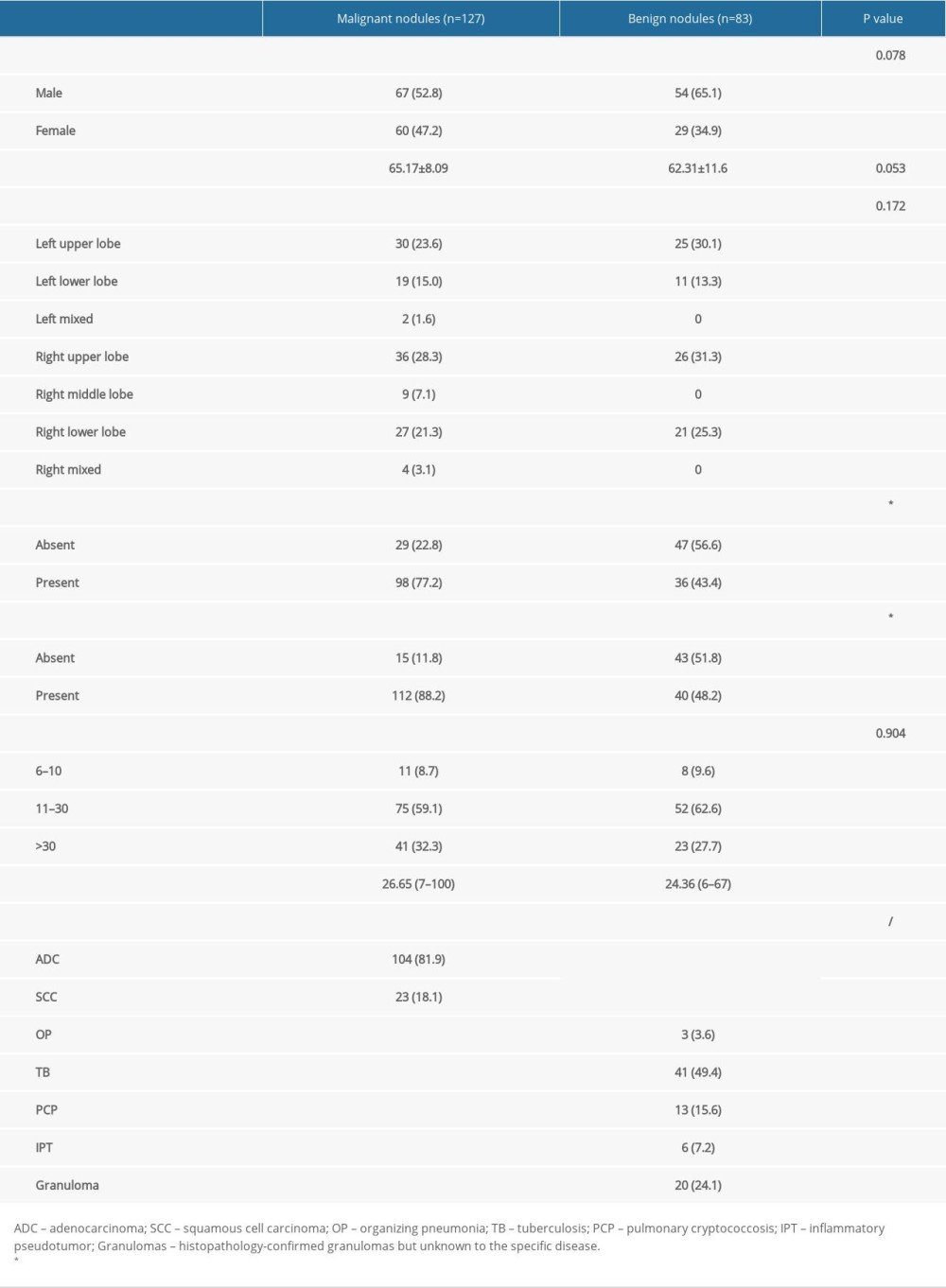

In this study, 28.3% of malignant nodules and 31.3% of benign nodules were located in the right upper lobe. Most nodules (59.1% of malignant and 62.6% of benign) measured 1.0–3.0 cm. Spiculation was found in 48.2% and lobulation in 43.4% of benign nodules. Malignant and benign nodules differed significantly in subjective CT features, including spiculation and lobulation (P<0.001), whereas age and sex did not differ significantly (P>0.05). Patient characteristics are presented in Table 2.

EVALUATING PERFORMANCE OF THE MODELS:

We developed the Inception V3 model separately at the image level and at the lesion level; its performance is shown in Table 3. There was no significant difference between these 2 models in either the training group or the test group (P>0.05). The ResNet50 model was built at the image level, and its results were compared with those of the Inception V3 model (Table 4). The ROC curves of both models showed high discriminative ability, with the true-positive rate approaching 1 (Figure 3). Inception V3 and ResNet50 also did not differ significantly in the training group or the test group (P>0.05). In addition, the diagnostic accuracy of both models was high, and Fisher's exact test showed no significant difference (P>0.05).
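Fisher's exact test, used above to compare diagnostic accuracy, evaluates a 2×2 table (for example, correctly versus incorrectly classified cases for each model) through the hypergeometric distribution. The sketch computes the two-sided P value by summing the probabilities of all tables with the same margins that are no more likely than the observed one; the example table is the classic 3/1/1/3 textbook case, not study data.

```python
import math


def fisher_exact_2x2(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact test for the table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2
    denom = math.comb(n, col1)

    def table_prob(x: int) -> float:
        # Hypergeometric probability that the top-left cell equals x, margins fixed
        return math.comb(row1, x) * math.comb(row2, col1 - x) / denom

    p_observed = table_prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Small relative tolerance so tables tied with the observed one survive rounding
    p = sum(table_prob(x) for x in range(lo, hi + 1)
            if table_prob(x) <= p_observed * (1 + 1e-7))
    return min(p, 1.0)


print(round(fisher_exact_2x2(3, 1, 1, 3), 4))
```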

Discussion

Herein, we developed and validated a new transfer learning model based on thin-slice noncontrast CT images, which serves as a noninvasive diagnostic tool for differentiating malignant from benign lung nodules.

Detection and characterization of pulmonary nodules are important because they are the first step in early lung cancer diagnosis. In our study, radiographic features (lobulation and spiculation) were more common in the lung cancer group. Our results agree with several previous studies reporting that lung cancer more commonly affects the upper lobes, especially the right upper lobe [35]. Nodule size is also a primary determinant of malignancy [36], and guidelines indicate that a suspicious nodule larger than 6 mm in diameter carries a risk of malignancy [4]. In line with this, the smallest nodule in our study measured 7 mm, which is smaller than in previous studies. Additionally, our dataset included many subtypes of benign nodules, such as organizing pneumonia, pulmonary cryptococcosis, and inflammatory pseudotumors, which are morphologically hard to distinguish from lung cancer.

DL has shown strong performance in the medical field because it can be trained end-to-end in a supervised manner while learning highly discriminative image features [37,38]. There is also a growing body of research on predicting the malignancy of pulmonary nodules [39–42] and even metastasis, and DL has shown great potential for prognostic evaluation [30,43]. One of our classifiers was based on Inception [15], whose parallel convolutional branches capture input features at multiple scales, which suits nodules whose CT features vary in scale. The other model was ResNet [44], which can help compensate for the loss of small-nodule details. We also built the models at the image level (5008 images) rather than only at the lesion level (210 lesions), which avoided having too few training examples to learn a full deep representation. Moreover, unlike radiomics classifiers [45], which use features calculated from segmented objects as input, CNNs extract high-throughput features automatically, avoiding the laborious process of manual feature extraction [46].

In our study, the lesion-level Inception V3 model performed well, with AUCs of 0.998 in the training set and 0.999 in the validation set and accuracies of 0.984 and 0.989, respectively, higher than those of previous studies [10,29,47], which reported AUCs of 0.86–0.94 and diagnostic accuracies of 0.84–0.96. The AUC and diagnostic accuracy of the image-level Inception V3 model were 0.977 and 0.933, respectively, higher than those of the image-level ResNet50 model. PET/CT can also discriminate nodules well, but Lai et al [48] built a PET/CT-based model that achieved a lower accuracy of 0.79, and PET/CT is expensive and time-consuming. We therefore built our models on noncontrast CT, which performed better in our study and, unlike contrast-enhanced CT, is suitable for patients who cannot tolerate contrast agents because of allergies or renal failure.

Lesion segmentation is the most challenging aspect of model evaluation [49,50]; we used expert image annotation, which can be an effective way to optimize the model. Building on earlier lung nodule classification studies, our model takes CT scanning parameters, including slice thickness and contrast medium injection, into consideration, because biological heterogeneity within the tumor can be detected and described on plain-phase (noncontrast) CT images, whereas intravenous contrast agent within the tumor may obscure this heterogeneity. Using only thin-slice noncontrast images therefore simplifies the workflow, and in this study it yielded high accuracy and specificity.

Our study has some limitations. First, this was a retrospective study and thus had inherent selection bias. Second, the sample was small because of strict inclusion criteria, which could easily have caused data imbalance. To reduce the risk of bias from having more malignant than benign nodules, the benign dataset was dynamically oversampled at the lesion level and image level during training [51]. Third, the study was limited to discrimination between benign and malignant nodules and did not further classify benign nodules such as organizing pneumonia (OP) and tuberculosis (TB). Fourth, the use of different CT scanners may have affected some CT evaluations through partial-volume effects.
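The oversampling step mentioned above can be illustrated with simple random oversampling, in which minority-class samples are drawn with replacement until both classes are equally represented. This is a generic sketch under that assumption; the "dynamic" scheme of [51] may differ (for example, by resampling anew at every epoch), and the function name and seed are hypothetical.

```python
import random
from collections import Counter
from typing import List, Sequence, Tuple


def random_oversample(samples: Sequence[int], labels: Sequence[int],
                      seed: int = 0) -> Tuple[List[int], List[int]]:
    """Duplicate randomly chosen samples of smaller classes until all classes match the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_samples, out_labels = list(samples), list(labels)
    for cls, count in counts.items():
        pool = [s for s, y in zip(samples, labels) if y == cls]
        for _ in range(target - count):
            out_samples.append(rng.choice(pool))  # sampling with replacement
            out_labels.append(cls)
    return out_samples, out_labels


# Lesion counts of this cohort: 127 malignant (1) and 83 benign (0)
labels = [1] * 127 + [0] * 83
samples = list(range(len(labels)))
bal_samples, bal_labels = random_oversample(samples, labels)
print(Counter(bal_labels))
```

Oversampling should be applied only to the training portion, so that duplicated lesions cannot appear in both training and test data.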

Conclusions

In conclusion, we built a novel transfer learning model with high accuracy in distinguishing malignant vs benign lung nodules. It can provide added diagnostic value to differentiate lung nodules and reduce the need for invasive diagnostic procedures, and it may assist clinicians in creating personalized treatment strategies and choosing the optimal intervention.

Figures

Figure 1. The workflow of the collection of patients. Created using Microsoft Office Visio 2016, China.

Figure 2. CT images with discordant interpretations between deep learning models and radiologists. (A) Model false-positive: a 63-year-old male patient with a histologically confirmed benign nodule that the model predicted as malignant. (B) Model false-negative: a 47-year-old male patient with a histologically confirmed malignant nodule that the model predicted as benign.

Figure 3. (A, B) Receiver operating characteristic (ROC) curves of the 2 transfer learning models (ResNet50 and Inception V3) for predicting pulmonary nodule malignancy (image level). The x-axis represents the false-positive rate (FPR) and the y-axis represents the true-positive rate (TPR). Created using matplotlib version 2.2.2 (Python 3.6).

References

1. Jemal A, Bray F, Center MM, Global cancer statistics: CA Cancer J Clin, 2011; 61(2); 69-90

2. Valente IR, Cortez PC, Neto EC, Automatic 3D pulmonary nodule detection in CT images: A survey: Comput Methods Programs Biomed, 2016; 124; 91-107

3. American College of Radiology Committee on Lung-RADS: Lung-RADS Assessment Categories version 1.1

4. MacMahon H, Naidich DP, Goo JM, Guidelines for management of incidental pulmonary nodules detected on CT images: From the Fleischner Society 2017: Radiology, 2017; 284(1); 228-43

5. Carter S, Barratt A, What is overdiagnosis and why should we take it seriously in cancer screening?: Public Health Res Pract, 2017; 27(3); 2731722

6. Patz E, Pinsky P, Gatsonis C, Overdiagnosis in low-dose computed tomography screening for lung cancer: JAMA Intern Med, 2014; 174(2); 269-74

7. Yuan X, Zhang J, Quan C, Differentiation of malignant and benign pulmonary nodules with first-pass dual-input perfusion CT: Eur Radiol, 2013; 23(9); 2469-74

8. Shan F, Zhang Z, Xing W, Differentiation between malignant and benign solitary pulmonary nodules: use of volume first-pass perfusion and combined with routine computed tomography: Eur J Radiol, 2012; 81(11); 3598-605

9. Hawkins S, Wang H, Liu Y, Predicting malignant nodules from screening CT scans: J Thorac Oncol, 2016; 11(12); 2120-28

10. Chen CH, Chang CK, Tu CY, Radiomic features analysis in computed tomography images of lung nodule classification: PLoS One, 2018; 13(2); e0192002

11. Meng F, Guo Y, Li M, Radiomics nomogram: A noninvasive tool for preoperative evaluation of the invasiveness of pulmonary adenocarcinomas manifesting as ground-glass nodules: Transl Oncol, 2021; 14(1); 100936

12. Budd S, Robinson EC, Kainz B, A survey on active learning and human-in-the-loop deep learning for medical image analysis: Med Image Anal, 2021; 71; 102062

13. Liu J, Gong M, He H, Deep associative neural network for associative memory based on unsupervised representation learning: Neural Netw, 2019; 113; 41-53

14. Litjens G, Kooi T, Bejnordi B, A survey on deep learning in medical image analysis: Med Image Anal, 2017; 42; 60-88

15. Wang X, Mao K, Wang L, An appraisal of lung nodules automatic classification algorithms for CT images: Sensors (Basel), 2019; 19(1); 194

16. Coudray N, Ocampo P, Sakellaropoulos T, Classification and mutation prediction from non-small cell lung cancer histopathology images using deep learning: Nat Med, 2018; 24(10); 1559-67

17. Zhang P, Ma Z, Zhang Y, Chen X, Wang G, Improved Inception V3 method and its effect on Radiologists’ performance of tumor classification with automated breast ultrasound system: Gland Surg, 2021; 10(7); 2232-45

18. Sridhar S, Amutharaj J, Valsalan P, A Torn ACL mapping in knee MRI images using deep convolution neural network with Inception-v3: J Healthc Eng, 2022; 2022; 7872500

19. Sun H, Wang A, Wang W, Liu C, An improved deep residual network prediction model for the early diagnosis of Alzheimer’s disease: Sensors (Basel), 2021; 21(12); 4182

20. Harangi B, Baran A, Hajdu A, Classification of skin lesions using an ensemble of deep neural networks: Annu Int Conf IEEE Eng Med Biol Soc, 2018; 2018; 2575-78

21. Lee S, Rim B, Jou S, Deep-learning-based coronary artery calcium detection from CT image: Sensors (Basel), 2021; 21(21); 7059

22. Shen W, Zhou M, Yang F, Multi-scale convolutional neural networks for lung nodule classification: Inf Process Med Imaging, 2015; 24; 588-99

23. Lyu J, Bi X, Ling SH, Multi-level cross residual network for lung nodule classification: Sensors (Basel), 2020; 20(10); 2837

24. Gu Y, Lu X, Yang L, Automatic lung nodule detection using a 3D deep convolutional neural network combined with a multi-scale prediction strategy in chest CTs: Comput Biol Med, 2018; 103; 220-31

25. Nasrullah N, Sang J, Alam MS, Automated lung nodule detection and classification using deep learning combined with multiple strategies: Sensors (Basel), 2019; 19(17); 3722

26. Khorrami M, Bera K, Leo P, Stable and discriminating radiomic predictor of recurrence in early stage non-small cell lung cancer: Multi-site study: Lung Cancer, 2020; 142; 90-97

27. Buch K, Li B, Qureshi M, Quantitative assessment of variation in CT parameters on texture features: Pilot study using a nonanatomic phantom: Am J Neuroradiol, 2017; 38(5); 981-85

28. He L, Huang Y, Ma Z, Effects of contrast-enhancement, reconstruction slice thickness and convolution kernel on the diagnostic performance of radiomics signature in solitary pulmonary nodule: Sci Rep, 2016; 6; 34921

29. Dilger S, Uthoff J, Judisch A, Improved pulmonary nodule classification utilizing quantitative lung parenchyma features: J Med Imaging (Bellingham), 2015; 2(4); 041004

30. Dou TH, Coroller TP, van Griethuysen JJM, Peritumoral radiomics features predict distant metastasis in locally advanced NSCLC: PLoS One, 2018; 13(11); e0206108

31. Beig N, Khorrami M, Alilou M, Perinodular and intranodular radiomic features on lung CT images distinguish adenocarcinomas from granulomas: Radiology, 2019; 290(3); 783-92

32. Lin X, Jiao H, Pang Z, Lung cancer and granuloma identification using a deep learning model to extract 3-dimensional radiomics features in CT imaging: Clin Lung Cancer, 2021; 22(5); e756-66

33. Khorrami M, Bera K, Thawani R, Distinguishing granulomas from adenocarcinomas by integrating stable and discriminating radiomic features on non-contrast computed tomography scans: Eur J Cancer, 2021; 148; 146-58

34. Morid MA, Borjali A, Del Fiol G, A scoping review of transfer learning research on medical image analysis using ImageNet: Comput Biol Med, 2021; 128; 104115

35. Walter J, Heuvelmans M, Bock G, Characteristics of new solid nodules detected in incidence screening rounds of low-dose CT lung cancer screening: The NELSON study: Thorax, 2018; 73(8); 741-47

36. Li C, Liao J, Cheng B, Lung cancers and pulmonary nodules detected by computed tomography scan: A population-level analysis of screening cohorts: Ann Transl Med, 2021; 9(5); 372

37. Avanzo M, Stancanello J, Pirrone G, Sartor G, Radiomics and deep learning in lung cancer: Strahlenther Onkol, 2020; 196(10); 879-87

38. Gong J, Liu J, Hao W, A deep residual learning network for predicting lung adenocarcinoma manifesting as ground-glass nodule on CT images: Eur Radiol, 2020; 30(4); 1847-55

39. Mao L, Chen H, Liang M, Quantitative radiomic model for predicting malignancy of small solid pulmonary nodules detected by low-dose CT screening: Quant Imaging Med Surg, 2019; 9(2); 263-72

40. Heuvelmans MA, van Ooijen PMA, Ather S, Lung cancer prediction by Deep Learning to identify benign lung nodules: Lung Cancer, 2021; 154; 1-4

41. Uthoff J, Nagpal P, Sanchez R, Differentiation of non-small cell lung cancer and histoplasmosis pulmonary nodules: Insights from radiomics model performance compared with clinician observers: Transl Lung Cancer Res, 2019; 8(6); 979-88

42. Hu X, Gong J, Zhou W, Computer-aided diagnosis of ground glass pulmonary nodule by fusing deep learning and radiomics features: Phys Med Biol, 2021; 66(6); 065015

43. He B, Dong D, She Y, Predicting response to immunotherapy in advanced non-small-cell lung cancer using tumor mutational burden radiomic biomarker: J Immunother Cancer, 2020; 8(2); e000550

44. Hong Y, Pan H, Jia Y, ResDNet: Efficient dense multi-scale representations with residual learning for high-level vision tasks: IEEE Trans Neural Netw Learn Syst, 2022 [Online ahead of print]

45. Wang J, Liu X, Dong D, Prediction of malignant and benign of lung tumor using a quantitative radiomic method: Annu Int Conf IEEE Eng Med Biol Soc, 2016; 2016; 1272-75

46. Sarıgül M, Ozyildirim B, Avci M, Differential convolutional neural network: Neural Netw, 2019; 116; 279-87

47. Arevalo J, Gonzalez F, Ramos-Pollan R, Convolutional neural networks for mammography mass lesion classification: Annu Int Conf IEEE Eng Med Biol Soc, 2015; 2015; 797-800

48. Lai Y, Wu K, Tseng N, Differentiation between malignant and benign pulmonary nodules by using automated three-dimensional high-resolution representation learning with fluorodeoxyglucose positron emission tomography-computed tomography: Front Med (Lausanne), 2022; 9; 773041

49. Gillies R, Kinahan P, Hricak H, Radiomics: Images are more than pictures, they are data: Radiology, 2016; 278(2); 563-77

50. Majkowska A, Mittal S, Steiner DF, Chest radiograph interpretation with deep learning models: Assessment with radiologist-adjudicated reference standards and population-adjusted evaluation: Radiology, 2020; 294(2); 421-31

51. Zhou L, Zhang Z, Chen YC, A deep learning-based radiomics model for differentiating benign and malignant renal tumors: Transl Oncol, 2019; 12(2); 292-300

Tables

Table 1. Image data of patients.

Table 2. Characteristics of solid nodules in the lung.

Table 3. Results of Inception V3 model.

Table 4. Five-fold cross-validation results of InceptionV3 and ResNet50 models (image-level).
